TypeScript Agent Frameworks in 2026: Loop, Runtime, Sandbox

TypeScript teams shipping simple LLM-powered features can get by without an agent framework: a model call, a schema, and a database write are enough. Once the model starts choosing tools, running across turns, surviving deploys, or coordinating with other agents, it is time to look for a framework. Here is a useful mental model for choosing one for your TS projects.

There are three layers a framework can provide, and they solve different problems:

| Layer | What it does | What you'd ask for |
|---|---|---|
| Loop | Wraps the agent loop so you don't write while (!done) {…} | "Let the model decide what to do next." |
| Runtime | Keeps the loop alive across crashes, deploys, and long pauses | "Keep the work alive when servers die." |
| Sandbox | Runs an existing coding agent in isolation, often in parallel | "Run ten Claude Codes in ten boxes." |

The newest of the three is the sandbox layer, where teams no longer build agents from scratch; they wrap already-good coding agents and run them in parallel against real repos. To find the right tool for the job, ask yourself these questions.

- Am I building an agent loop? You want a loop framework.

- Do I already have a loop and need it to survive production? You want a runtime.

- Do I already have a coding agent and want to run many of them against a repo? You want a sandbox harness.

If none of those describe your work, and you are just calling a model, parsing JSON, and writing to a database, you probably need none of this. Most teams should land on at most two of these layers, often just one. This post walks through all three as of May 2026, names the load-bearing tools in each, and ends with a picker table and a worked example. The taxonomy is the product. The tools are the inventory.

Status quo: a pipeline, not an agent

Here is a representative LLM pipeline at a typical TS product team in 2026. Pretend it is a news-desk job that processes incoming articles from an RSS feed:

```ts
// app/jobs/process-incoming-article.ts
import { generateObject } from "ai";
import { z } from "zod";
import { db, articles } from "@/db";

const ArticleMeta = z.object({
  title: z.string(),
  summary: z.string().max(280),
  tags: z.array(z.string()).max(5),
  mentioned_tools: z.array(z.string()),
  sentiment: z.enum(["positive", "neutral", "negative"]),
});

export async function processArticle(rawHtml: string, sourceUrl: string) {
  const { object } = await generateObject({
    model: "anthropic/claude-haiku-4-5",
    schema: ArticleMeta,
    prompt: `Extract structured metadata from this article:\n\n${rawHtml}`,
  });

  await db.insert(articles).values({
    source_url: sourceUrl,
    ...object,
    processed_at: new Date(),
  });
}
```

A short file. One model call. Schema-validated structured output. Database write. Ship it.

This pattern covers a wide range of real production LLM work in TypeScript right now: classification, extraction, summarization, embedding prep, tagging, moderation, content rewriting. It is not an agent. It is a pipeline. The model does one thing and returns. There is no loop. There is no tool the model picks. There is no retry the model decides on. Your code drives the LLM, not the other way around.

If your job today is shaped like the snippet above, an agent framework probably adds more surface area than value. The Vercel AI SDK already gives you generateObject, structured output, streaming, and provider switching, and that is genuinely all you need for the 80% case. Spending two weeks adopting a graph-based agent framework to power a tagging job is a category error.

So when does a pipeline become an agent? Roughly when:

- The model decides which tool to call next (not your code).

- The work runs across many turns rather than in one shot.

- The work needs to survive crashes, deploys, or human-approval pauses lasting hours or days.

- The work needs to coordinate across multiple specialist models or sub-agents.

When any one of those four shows up in your spec, the single generateObject call no longer suffices. You need a loop, you need state, and you probably need a runtime to host the loop so it doesn't die when your serverless function times out at 300 seconds.

That is when you start shopping. And the moment you do, you discover the TS agent ecosystem is not one market but three.

The Loop: Building the Agent

You write the agent: model, instructions, tools, stop condition. The framework wraps the loop so you do not have to write while (!done) { … } yourself.
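For a sense of what is being wrapped, here is a minimal hand-rolled sketch of that loop. It is not any framework's API: the `Model`, `Tool`, and `runLoop` names are made up for illustration, and `fakeModel` is a scripted stand-in for a real model call. The step cap plays the role that a stop condition plays in the frameworks below.

```typescript
// Hypothetical types for illustration; no framework exports these names.
type ToolCall = { tool: string; input: string };
type ModelTurn =
  | { kind: "tool"; call: ToolCall }
  | { kind: "final"; text: string };

type Model = (transcript: string[]) => Promise<ModelTurn>;
type Tool = (input: string) => Promise<string>;

async function runLoop(
  model: Model,
  tools: Map<string, Tool>,
  prompt: string,
  maxSteps = 20,
): Promise<string> {
  const transcript: string[] = [`user: ${prompt}`];
  for (let step = 0; step < maxSteps; step++) {
    const turn = await model(transcript);
    if (turn.kind === "final") return turn.text; // the model decided it is done

    // The model, not your code, picked which tool to run next.
    const tool = tools.get(turn.call.tool);
    const output = tool
      ? await tool(turn.call.input)
      : `error: unknown tool ${turn.call.tool}`;
    transcript.push(`tool ${turn.call.tool}: ${output}`);
  }
  throw new Error(`no final answer after ${maxSteps} steps`);
}

// Scripted stand-in for a real model: look up the order once, then answer.
const fakeModel: Model = async (transcript): Promise<ModelTurn> =>
  transcript.length === 1
    ? { kind: "tool", call: { tool: "orderStatus", input: "A-17" } }
    : { kind: "final", text: `Done. ${transcript[transcript.length - 1]}` };

const tools = new Map<string, Tool>([
  ["orderStatus", async (id) => `order ${id} shipped`],
]);
```

Calling `runLoop(fakeModel, tools, "Where is order A-17?")` takes one tool step and then returns the model's final text. Everything a loop framework adds (memory, streaming, tracing, approval gates) hangs off this skeleton.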

This is the most crowded shelf. The eight serious contenders all wrap the same fundamental thing, a model call (generateText, in AI SDK terms) plus a tool-calling loop, but they differ sharply on the design bets they take on top.

Mastra

What it is: Mastra is a batteries-included TypeScript agent framework, built by the original Gatsby.js team.

Best fit: You want a Vercel/Next.js-friendly stack and prefer batteries-included over assembling your own.

Avoid when: You already have strong infra opinions and only need a thin agent loop.

Why it matters: Bundles loop, memory, RAG, evals, and observability behind one @mastra/core. Provider-agnostic via the Mastra Model Router (3,300+ models, 94 providers, automatic fallback). Rides on Vercel's AI SDK underneath. Deployer packages for Vercel, Cloudflare Workers, and Netlify mean it scales to zero. Observability via Mastra Studio.

```ts
import { Agent } from "@mastra/core/agent";

// ticketLookup, orderStatus, and refundProcessor are tools assumed to be defined elsewhere.
const agent = new Agent({
  id: "support-agent",
  name: "Customer Support Agent",
  instructions: "You are a helpful customer support assistant.",
  model: "anthropic/claude-sonnet-4-6",
  tools: { ticketLookup, orderStatus, refundProcessor },
});
```

Vercel AI SDK with Agent

What it is: The Vercel AI SDK is one of the most-downloaded TS AI libraries, and v6 ships a stable Agent interface alongside its generateText / streamText primitives.

Best fit: You already use the SDK for streaming/UI work and want the smallest step up.

Avoid when: You need deep durable-workflow features outside the Vercel ecosystem.

Why it matters: ToolLoopAgent is the default class implementation; the Agent symbol itself is an interface, so other libraries can ship their own. Defaults include stopWhen: stepCountIs(20), tool-execution approval gates (Tool.needsApproval), and OAuth for MCP clients via @ai-sdk/mcp. For long-running durable agents, the answer is DurableAgent in Vercel Workflows, which is approaching but not yet at full parity with ToolLoopAgent (issue #168 tracks the gaps). Synchronous agents are capped at 300 seconds on Vercel's Pro tier.

```ts
import { ToolLoopAgent } from "ai";
import { openai } from "@ai-sdk/openai"; // provides openai.tools.localShell

// getSandbox() is assumed to be your own helper that returns a sandbox handle.
const sandboxAgent = new ToolLoopAgent({
  model: "openai/gpt-5.4",
  system: "You are an agent with access to a shell environment.",
  tools: {
    shell: openai.tools.localShell({
      execute: async ({ action }) => {
        const [cmd, ...args] = action.command;
        const sandbox = await getSandbox();
        const command = await sandbox.runCommand({ cmd, args });
        return { output: await command.stdout() };
      },
    }),
  },
});
```

OpenAI Agents SDK

What it is: The OpenAI Agents SDK for JS is the minimalist pick: four primitives (Agents, Handoffs, Tools, Guardrails), no graph DSL.

Best fit: You live in the OpenAI ecosystem, want minimal abstractions, or need voice and realtime as first-class concerns.

Avoid when: Provider neutrality is a hard requirement.

Why it matters: Coordination happens via an explicit handoffs array on the agent, with two named patterns: peer handoffs (control transfers) and manager-style orchestration (others as tools, coordinator stays in charge). OpenAI-hosted tools are first-class citizens (webSearchTool, fileSearchTool, codeInterpreterTool, imageGenerationTool), which other frameworks have to wrap or rebuild. Provider-neutral in theory via the Model interface, OpenAI-native in fact via the built-in classes. Voice and Realtime ship with WebRTC, SIP, and WebSocket transports out of the box.

```ts
import { Agent, run } from "@openai/agents";

const agent = new Agent({
  name: "Assistant",
  instructions: "You are a helpful assistant",
});

const result = await run(
  agent,
  "Write a haiku about recursion in programming.",
);

console.log(result.finalOutput);
```

Claude Agent SDK

What it is: The Claude Agent SDK ships Claude Code itself as a programmable library, not an abstract agent framework.

Best fit: Your job looks like Claude Code, not like generic chat: code-, tool-, or runtime-heavy work.

Avoid when: Your work has nothing to do with code or shell tools.

Why it matters: Same tools, same agent loop, same context management as Claude Code. The TypeScript package bundles a native Claude Code binary as an optional dependency, so installing @anthropic-ai/claude-agent-sdk puts the actual Claude Code runtime in node_modules/. Built-in tools, lifecycle hooks, subagents, MCP server integration, sessions with resume/fork/rewind, and .claude/skills/*/SKILL.md skills loaded from the filesystem (the same format that hit #1 on GitHub trending via mattpocock/skills). Multi-provider auth: Anthropic API, Bedrock, Vertex AI, Azure.

```ts
import { query } from "@anthropic-ai/claude-agent-sdk";

for await (const message of query({
  prompt: "Find and fix the bug in auth.ts",
  options: { allowedTools: ["Read", "Edit", "Bash"] },
})) {
  console.log(message);
}
```

LangGraph (TypeScript)

What it is: LangGraph builds agents as graphs, not loops, with explicit nodes, edges, state, and first-class checkpointing.

Best fit: Use only when the workflow is genuinely graph-shaped.

Avoid when: The problem is not graph-shaped, or when you need to scale to zero (LangGraph's persistence-first model does not).

Why it matters: Inspired by Google Pregel and Apache Beam. Deliberately low-level. The two-layer story is now explicit: use createAgent in the langchain package for a fast start; drop down to LangGraph's StateGraph when you need real graph control. If you reach for LangGraph proper, you reach for it because the problem is graph-shaped, not because you want a five-line agent. Pair with LangSmith Deployment for managed deployment.

```ts
import { StateGraph, START, END, Annotation } from "@langchain/langgraph";
import { ChatAnthropic } from "@langchain/anthropic";

const State = Annotation.Root({
  messages: Annotation<string[]>({ reducer: (a, b) => a.concat(b) }),
});

const llm = new ChatAnthropic({ model: "claude-3-7-sonnet-latest" });

const graph = new StateGraph(State)
  .addNode("chat", async (state) => ({
    messages: [(await llm.invoke(state.messages.join("\n"))).content as string],
  }))
  .addEdge(START, "chat")
  .addEdge("chat", END)
  .compile();
```

AgentKit from Inngest

What it is: AgentKit is a thin agent layer on top of Inngest's durable execution engine, where every model or tool call is a checkpointed Inngest step.

Best fit: Use when durability is the reason you are here.

Avoid when: You do not want Inngest as your durability substrate.

Why it matters: The differentiator is state-based deterministic routing. Your router function is plain TypeScript that reads typed state from network.state.kv and decides which agent goes next. The README explicitly recommends code-based routing as the starting point, with LLM-based routing as an upgrade path, the opposite of how most agent frameworks frame the choice.

```ts
import { anthropic, createAgent, createNetwork } from "@inngest/agent-kit";

const neonAgent = createAgent({
  name: "neon-agent",
  system: "You are a helpful assistant who helps manage a Neon account.",
  tools: [
    /* … */
  ],
  mcpServers: [
    { name: "neon", transport: { type: "streamable-http", url: "..." } },
  ],
});

const network = createNetwork({
  name: "neon-agent",
  agents: [neonAgent],
  defaultModel: anthropic({ model: "claude-3-5-sonnet-20240620" }),
  // Code-based routing: stop once an answer is in state, otherwise keep running the agent.
  router: ({ network }) =>
    network?.state.kv.get("answer") ? undefined : neonAgent,
});
```

VoltAgent

What it is: VoltAgent is the youngest of the batteries-included frameworks at the time of writing, structurally similar to Mastra: TypeScript core paired with a console for the production layer.

Best fit: Use when the console matters as much as the framework.

Avoid when: You want minimal platform surface area, or production track record matters more than feature breadth.

Why it matters: The differentiator is the scope of the console. VoltOps Console covers a broader surface than Mastra Studio: observability, evals, prompts management, guardrails, and deployment, in one place, cloud or self-hosted. Pluggable memory adapters, supervisor and sub-agent runtime, workflow engine with typed suspendSchema and resumeSchema for human-in-the-loop pause and resume. The model is supplied via @ai-sdk/openai, so VoltAgent rides on Vercel's AI SDK provider abstraction.

```ts
import { VoltAgent, Agent, Memory } from "@voltagent/core";
import { LibSQLMemoryAdapter } from "@voltagent/libsql";
import { honoServer } from "@voltagent/server-hono";
import { openai } from "@ai-sdk/openai";

// weatherTool is assumed to be defined elsewhere.
const agent = new Agent({
  name: "my-agent",
  instructions: "A helpful assistant that can check the weather.",
  model: openai("gpt-4o-mini"),
  tools: [weatherTool],
  memory: new Memory({
    storage: new LibSQLMemoryAdapter({ url: "file:./.voltagent/memory.db" }),
  }),
});

new VoltAgent({ agents: { agent }, server: honoServer() });
```

Google ADK for TypeScript

What it is: Google's Agent Development Kit for TypeScript is a code-first port of Google's Python ADK, optimized for Gemini and Vertex AI but not locked to them.

Best fit: You are deep in Vertex AI and willing to ride a Pre-GA port.

Avoid when: The work matters in production and Pre-GA risk is unacceptable.

Why it matters: The README is upfront that the SDK is Pre-GA and "available 'as is' [with] limited support." The sibling SDKs (Python, Java, Go, Web) are the source of truth; the TS port is a follower. Cloud Run is the primary deployment target.

```ts
import { LlmAgent, GOOGLE_SEARCH } from "@google/adk";

const rootAgent = new LlmAgent({
  name: "search_assistant",
  description: "An assistant that can search the web.",
  model: "gemini-2.5-flash",
  instruction:
    "You are a helpful assistant. Answer user questions using Google Search when needed.",
  tools: [GOOGLE_SEARCH],
});
```

How to pick a loop framework

| Need | Default pick | Why | Watch out |
|---|---|---|---|
| Simple TS agent loop, smallest step up | Vercel AI SDK | Already use the SDK, want minimal new surface area | Durability needs Workflows + DurableAgent |
| Full TS agent app framework | Mastra | Loop, memory, RAG, evals, observability all in one core | More ecosystem commitment |
| Job looks like Claude Code | Claude Agent SDK | Same loop that powers Claude Code, programmable from TS | Bias toward code- and tool-heavy work |
| Workflow is truly graph-shaped | LangGraph | Explicit state, edges, checkpointing | More ceremony; does not scale to zero |
| Durability is the reason you are here | AgentKit | Every step is a checkpointed Inngest step | Tied to the Inngest model |
| OpenAI-native or voice-first | OpenAI Agents SDK | Handoffs and manager-style patterns built in; voice is core | Provider-neutral in theory, OpenAI-native in fact |
| Console matters as much as the framework | VoltAgent | Wider console surface than Mastra Studio | Newer; less production track record |
| Vertex AI shop, Pre-GA OK | Google ADK | Code-first port of Google's mature Python ADK | Pre-GA, "as is" support |

If you are starting fresh in a Next.js shop with no strong opinions, the realistic shortlist is Mastra (if you want batteries) or Vercel AI SDK with Agent (if you want to stay closer to the metal).

Recommended

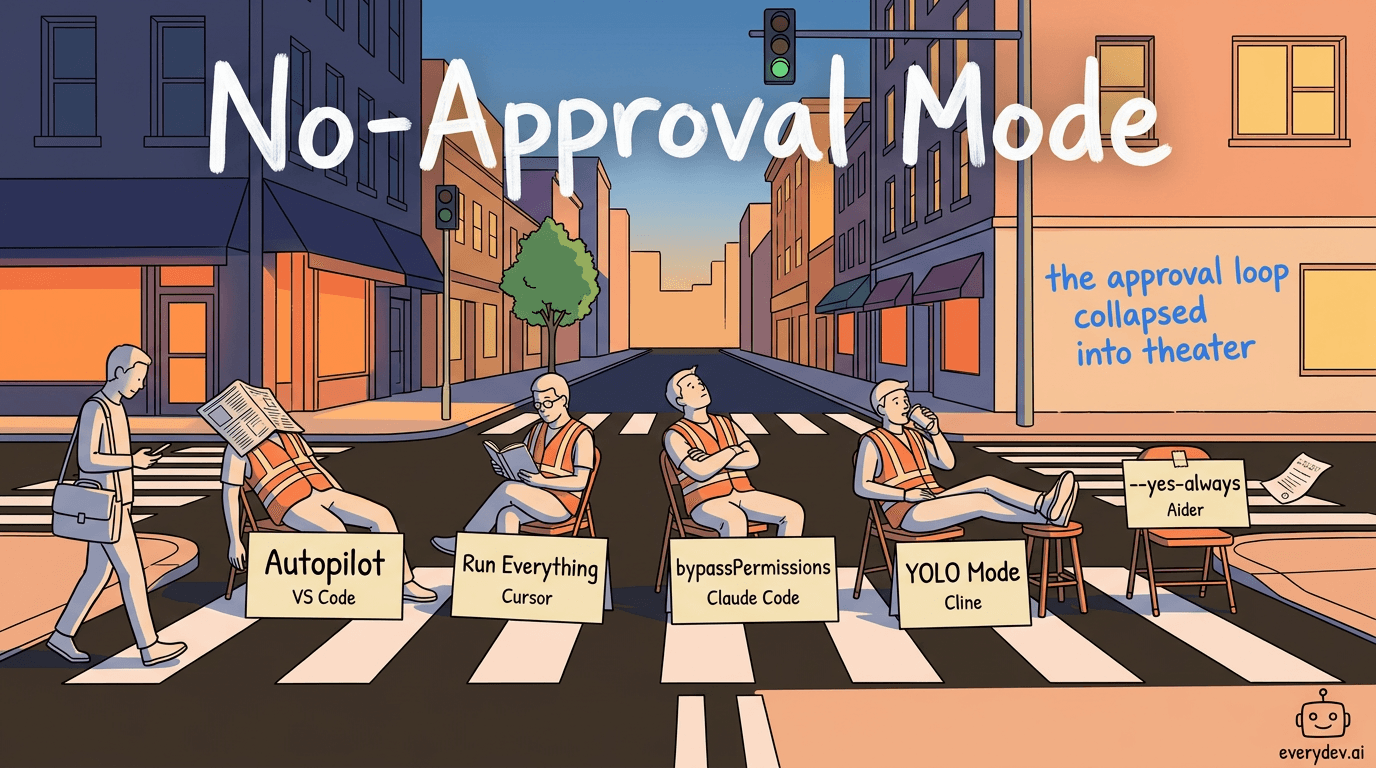

Every Major AI Coding Tool Now Has a No-Approval Mode

You ask your coding agent to scaffold a project. It creates files, installs packages, runs setup commands, and starts fixing import errors. Somewhere around the eighth "Continue" click, you stop reading what it's asking.…

Read nextThe Runtime: Hosting the Agent

The runtime is the layer most often confused with the loop itself. These tools do not give you new loop primitives. They give you somewhere to put the loop you already wrote: webhook ingress, scheduling, memory, durable state across deploys, autoscaling, observability for runs that take minutes or hours.

A runtime starts mattering once your agent stops fitting in a single 300-second function call.
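To make "durable state" concrete, here is a rough sketch of the idea most durable runtimes build on: each step's result is checkpointed under an ID, so a retry after a crash replays finished steps instead of redoing them. Everything here (`makeStep`, the in-memory `Map`, the simulated crash) is hypothetical and only illustrates the pattern; a real runtime persists checkpoints outside the process.

```typescript
// Hypothetical in-memory checkpoint store; a real runtime would persist this.
type Checkpoints = Map<string, unknown>;

function makeStep(checkpoints: Checkpoints) {
  return async function step<T>(id: string, fn: () => Promise<T>): Promise<T> {
    if (checkpoints.has(id)) return checkpoints.get(id) as T; // replay a finished step
    const result = await fn();
    checkpoints.set(id, result); // checkpoint before moving on
    return result;
  };
}

let fetchCalls = 0;
let crashOnce = true;

async function job(checkpoints: Checkpoints): Promise<string> {
  const step = makeStep(checkpoints);

  const text = await step("fetch", async () => {
    fetchCalls++;
    return "raw article";
  });

  return step("summarize", async () => {
    if (crashOnce) {
      crashOnce = false;
      throw new Error("simulated crash"); // stands in for a deploy or a dead server
    }
    return `summary of ${text}`;
  });
}

async function runWithRetry(): Promise<string> {
  const checkpoints: Checkpoints = new Map();
  try {
    return await job(checkpoints);
  } catch {
    // The second attempt replays "fetch" from its checkpoint and only redoes "summarize".
    return await job(checkpoints);
  }
}
```

After `runWithRetry()` resolves, `fetchCalls` is still 1: the crash cost one retry of the failed step, not a rerun of the whole job.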

Flue

What it is: Flue is a framework-agnostic agent harness from the Astro team, tagline "The Agent Harness Framework."

Best fit: You want a framework-agnostic harness with a configurable sandbox story and skills-as-source-of-truth.

Avoid when: You need a mature production runtime; the README is candid that this is experimental.

Why it matters: Bet borrowed from Claude Code: agent logic should live in Markdown skills and AGENTS.md files, not TS classes. Default sandbox is virtual via just-bash (no container, faster and cheaper to spin up). Real isolation via the Daytona connector. Deployment targets: Node, Cloudflare Workers, GitHub Actions, GitLab CI/CD.

```ts
// .flue/agents/hello-world.ts
import type { FlueContext } from "@flue/sdk/client";
import * as v from "valibot";

export const triggers = { webhook: true };

export default async function ({ init, payload }: FlueContext) {
  const agent = await init({ model: "anthropic/claude-sonnet-4-6" });
  const session = await agent.session();

  const result = await session.prompt(
    `Translate this to ${payload.language}: "${payload.text}"`,
    {
      result: v.object({
        translation: v.string(),
        confidence: v.picklist(["low", "medium", "high"]),
      }),
    },
  );

  return result;
}
```

LangSmith Deployment

What it is: LangSmith Deployment is the matched runtime for LangGraph agents.

Best fit: You wrote your agent in LangGraph and want the matched runtime.

Avoid when: You are not using LangGraph; the platform is tightly coupled to the graph abstraction.

Why it matters: Managed durable execution, threads, cron, memory APIs, autoscaling task queues, three deployment modes (Cloud SaaS, Hybrid, Self-hosted on Kubernetes). Pricing is bundled with LangSmith on a shared billing surface: free Developer tier covers self-hosted prototyping at 5,000 traces/month; Plus is $39/user/month (capped at 10) plus per-run usage ($0.005/run + standby minutes); Enterprise is custom.

```json
{
  "node_version": "20",
  "dependencies": ["."],
  "graphs": { "agent": "./src/agent.ts:graph" },
  "env": ".env"
}
```
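As a back-of-the-envelope check on the Plus-tier figures quoted above, the sketch below adds seat cost and per-run cost. It uses only the prices stated in this post; standby minutes are left out because no rate is given here, and the team size and run count are made-up inputs.

```typescript
// Prices as quoted in this post, in thousandths of a dollar to keep the math exact.
const SEAT_MILLS = 39000; // $39 per user per month
const RUN_MILLS = 5; // $0.005 per run
const MAX_SEATS = 10; // Plus tier seat cap

function plusTierMonthlyUsd(seats: number, runs: number): number {
  if (!Number.isInteger(seats) || seats < 1 || seats > MAX_SEATS) {
    throw new RangeError(`Plus covers 1 to ${MAX_SEATS} seats`);
  }
  if (!Number.isInteger(runs) || runs < 0) {
    throw new RangeError("runs must be a non-negative integer");
  }
  // Standby minutes are billed too, but no rate is quoted here, so they are excluded.
  return (seats * SEAT_MILLS + runs * RUN_MILLS) / 1000;
}

// Hypothetical team: 5 seats and 20,000 runs in a month.
const estimate = plusTierMonthlyUsd(5, 20000); // 195 + 100 = 295
```

For that hypothetical team, seats ($195) still outweigh runs ($100); per-run charges overtake seat cost once monthly runs exceed 7,800 per seat.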

Mastra Platform

What it is: Mastra Platform is what you run when you write your agent in Mastra.

Best fit: You wrote your agent in Mastra and want the same vendor on the same release train.

Avoid when: You do not want vendor coupling; the framework also runs fine on generic deployer adapters.

Why it matters: Bundles Mastra Studio (evals, logs, traces, datasets, metrics), Mastra Server (deploy agents and workflows), and Memory Gateway (managed agent memory). Pricing: free Starter tier with unlimited users and deployments. Studio and Memory Gateway each have a $250/team/month Teams tier, metered separately. Enterprise is custom.

Claude Managed Agents

What it is: Claude Managed Agents is Anthropic's hosted runtime: "Claude Code as a service" to the Agent SDK's "Claude Code as a library." Currently in beta.

Best fit: You want Anthropic-hosted execution with rubric-graded outcomes, versioned memory, or multi-agent orchestration.

Avoid when: You need a native scheduler; you'll end up wiring up Trigger.dev or Inngest anyway.

Why it matters: Three primitives have no peer in the runtime layer. Outcomes (research preview) defines "done" via a markdown rubric, then provisions a separate-context grader agent that scores each iteration and sends the work back if it fails. Memory mounts versioned, audit-trailed text stores into the container at /mnt/memory/, with optimistic-concurrency content_sha256 preconditions and a redaction endpoint for compliance. Multiagent (research preview) lets a coordinator delegate to context-isolated threads in the same shared container. No native cron or webhook triggers, though: scheduling lives in Claude Code Routines (1-hour minimum interval), or you wire up Trigger.dev or Inngest. The local DX is unusually good for Claude Code users: as of v2.1.96+, Claude Code ships a Managed Agents skill that runs a conversational scaffolding flow, no web console required.

```ts
// client, agent, and environment are assumed to have been created earlier.
const session = await client.beta.sessions.create({
  agent: agent.id,
  environment_id: environment.id,
});

await client.beta.sessions.events.send(session.id, {
  events: [
    {
      type: "user.message",
      content: [
        { type: "text", text: "List the files in the working directory." },
      ],
    },
  ],
});
```
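The optimistic-concurrency idea behind the content_sha256 precondition can be sketched in a few lines. This is not the Managed Agents API: `MemoryFile` and its methods are hypothetical, and the store is in memory. It only shows why a write that carries the hash of the version it read is rejected when someone else wrote first.

```typescript
import { createHash } from "node:crypto";

const sha256 = (text: string): string =>
  createHash("sha256").update(text, "utf8").digest("hex");

// Hypothetical in-memory store for illustration; not the Managed Agents API.
class MemoryFile {
  private content = "";

  read(): { content: string; contentSha256: string } {
    return { content: this.content, contentSha256: sha256(this.content) };
  }

  // The write only lands if the caller read the latest version.
  write(next: string, expectedSha256: string): boolean {
    if (sha256(this.content) !== expectedSha256) return false; // stale read, reject
    this.content = next;
    return true;
  }
}

const file = new MemoryFile();
const seenByA = file.read();
const seenByB = file.read();

const aWrote = file.write("note from A", seenByA.contentSha256); // true
const bWrote = file.write("note from B", seenByB.contentSha256); // false: B's hash is stale

// B recovers by re-reading and retrying against the current hash.
const latest = file.read();
const bRetried = file.write(`${latest.content}\nnote from B`, latest.contentSha256); // true
```

No locks are held between read and write; the loser of a race finds out at write time and retries, which suits agents that may sit on a value for a long time before writing back.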

Inngest

What it is: Inngest is the durable-execution backbone that AgentKit was designed around, framework-agnostic and equally good for non-agent durable workloads.

Best fit: You want a framework-agnostic durable runtime with native cron, retries, and step-level observability.

Avoid when: You want everything (loop + runtime) bundled in one vendor product.

Why it matters: Your function code lives in your own infra (Vercel, Lambda, a long-running Node server). Inngest is the orchestration plane that calls your HTTP endpoint with checkpointed state, retries on failure, and gives you step-level observability. Hobby tier: 50,000 executions/month, 5 concurrent steps, no card. Pro from $75/month for 1M executions. Pay-as-you-go beyond that scales down to $0.000015 per execution above 50M.

```ts
import { Inngest } from "inngest";

const inngest = new Inngest({ id: "my-app" });

// fetchDoc is assumed to be your own helper.
export const summarize = inngest.createFunction(
  { id: "summarize-doc" },
  { event: "doc/uploaded" },
  async ({ event, step }) => {
    const text = await step.run("fetch", () => fetchDoc(event.data.url));

    const summary = await step.ai.infer("summarize", {
      /* … */
    });

    await step.sleep("wait", "1h");
    return summary;
  },
);
```

How to pick a runtime

| Need | Default pick | Why | Watch out |
|---|---|---|---|
| You wrote your agent in Mastra | Mastra Platform | Studio, Server, and Memory Gateway in one; same vendor, same release train | Vendor coupling; the framework also runs fine on generic deployer adapters |
| You wrote your agent in LangGraph | LangSmith Deployment | Matched durable execution, threads, cron, memory APIs, three deploy modes | Tightly coupled to the graph abstraction |
| You want Anthropic-hosted execution with rubric grading | Claude Managed Agents | Outcomes, Memory, and Multiagent primitives have no peer here | No native cron or webhook triggers; you wire up Trigger.dev or Inngest |
| You want a framework-agnostic durable runtime | Inngest | Native cron, retries, step-level observability; runs on your infra | Workflow runner, not a loop framework; you bring the loop |
| You want skills-as-source-of-truth with a swappable sandbox | Flue | Markdown skills + AGENTS.md model; pluggable via just-bash or Daytona | Experimental per the README; not a mature production runtime yet |

The runtime layer is not really agent-specific infrastructure. It is durable workflow infrastructure that happens to host agents well. Trigger.dev also fits here for non-agent workloads. The line between "workflow runner" and "agent runner" is blurrier than the marketing suggests. If you have already committed to a loop framework, the matched host is the path of least friction. If you have not, Inngest or Flue gives you optionality.

The Sandbox: Scaling a Coding Agent

The sandbox layer is the surprise of 2026 and probably the most interesting story in the category. You are not building an agent from scratch. You are wrapping an already-very-good coding agent (Claude Code, Codex, Cursor's agent) and running it sandboxed, often in parallel, often against a real repo. As of May 2026, this category is roughly six months old: a growing awesome-list of category-defining tools, an OpenAI conference talk titled "Harness Engineering: How to build software when humans steer and agents execute", and a Matt Pocock launch video for one of the most visible tools in the space, already past 50K views.

The reframing the category turns on is simple. An agent is more than the model. It is the model plus the scaffolding around it: skills, sub-agents, planning artifacts, verification loops, memory, sandboxes. That scaffolding is now the part that determines whether the agent succeeds or fails on real work.

PY's "Rethinking AI Agents" opens with the receipt: by modifying only the harness layer, a coding agent jumped from outside the top 30 to rank five on Terminal Bench 2. Same model. Different harness. A jump of more than twenty-five ranks. That is the bet the sandbox layer is making with money.

The cultural setup matters too. In February 2026, mattpocock/skills hit #1 on GitHub trending and is now well past 50,000 stars. Once everyone had the same .claude directory contents, the next obvious question was: how do I run these scripts in parallel against a repo while I am away from the keyboard? That is what the sandbox layer answers.

Sandcastle

What it is: Sandcastle is Matt Pocock's TS library for orchestrating sandboxed AI coding agents, with sandbox providers (Docker, Podman, Vercel Sandbox, your own) as swappable adapters.

Best fit: You have a Claude Code workflow that works locally and want to fan it out against a repo while you sleep.

Avoid when: You need a hosted runtime; Sandcastle is a library, not a platform.

Why it matters: Pocock's framing in the launch video was that he wanted "a simple TypeScript function that I could run and just say, 'Run this prompt inside this sandbox using this agent'", found nothing that matched, and built it himself. npx sandcastle init scaffolds a .sandcastle/ directory; branch strategies are first-class (head, branch, merge-to-head); worktree variants (wt.run) let you run multiple agents in parallel against the same repo without stepping on each other.

```ts
import { run, claudeCode } from "@ai-hero/sandcastle";
import { docker } from "@ai-hero/sandcastle/sandboxes/docker";

await run({
  agent: claudeCode("claude-opus-4-6"),
  sandbox: docker(), // or podman(), vercel(), or your own provider
  promptFile: ".sandcastle/prompt.md",
});
```
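The fan-out pattern itself is simple enough to sketch without any library. The code below is not Sandcastle's API: `fanOut`, `RunAgent`, and the branch naming are hypothetical, and `fakeRun` stands in for a real sandboxed agent run. It shows the two ideas that matter: a cap on how many agents run at once, and one branch per task so parallel runs do not step on each other.

```typescript
// Hypothetical runner signature; in practice this would wrap a sandboxed agent run.
type RunAgent = (task: string, branch: string) => Promise<string>;
type Outcome = { task: string; branch: string; ok: boolean; detail: string };

async function fanOut(
  tasks: string[],
  runAgent: RunAgent,
  concurrency: number,
): Promise<Outcome[]> {
  const queue = tasks.map((task, index) => ({ task, index }));
  const outcomes: Outcome[] = [];

  // Each worker pulls the next task until the queue is empty.
  async function worker(): Promise<void> {
    for (let job = queue.shift(); job !== undefined; job = queue.shift()) {
      const { task, index } = job;
      const branch = `agent/task-${index + 1}`; // one branch per task
      try {
        outcomes[index] = { task, branch, ok: true, detail: await runAgent(task, branch) };
      } catch (error) {
        // One failed run should not sink the rest of the batch.
        const detail = error instanceof Error ? error.message : String(error);
        outcomes[index] = { task, branch, ok: false, detail };
      }
    }
  }

  const workerCount = Math.max(1, Math.min(concurrency, tasks.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return outcomes;
}

// Stand-in for a real agent run: anything marked "flaky" fails.
const fakeRun: RunAgent = async (task, branch) => {
  if (task.includes("flaky")) throw new Error("tests failed");
  return `${branch}: finished ${task}`;
};
```

Running `fanOut(["fix lint", "flaky migration", "bump deps"], fakeRun, 2)` yields three outcomes in task order, with the second marked as failed and the other two finished on their own branches.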

Flue (in coding-agent / Daytona mode)

What it is: Same Flue from the runtime section, but the Daytona example backs the agent with a real Linux container so it can git clone, npm install, and modify a repo.

Best fit: You want a hosted variant of the Sandcastle pattern, with the same harness model as your other Flue agents.

Avoid when: You need branch-aware orchestration out of the box; you will have to write that yourself.

Why it matters: Same trigger model as in the runtime section (webhook: true), same init({ sandbox }) shape. Flue is the runtime; the AFK-orchestration logic is on you. Flue does not manage branch strategies or commit back to the head the way Sandcastle does.

```ts
import type { FlueContext } from "@flue/sdk/client";
import { Daytona } from "@daytonaio/sdk";
import { daytona } from "@flue/connectors/daytona";

export const triggers = { webhook: true };

export default async function ({ init, payload, env }: FlueContext) {
  // Back the agent with a real Daytona container instead of the virtual sandbox.
  const client = new Daytona({ apiKey: env.DAYTONA_API_KEY });
  const sandbox = await client.create();

  const agent = await init({
    sandbox: daytona(sandbox),
    model: "openai/gpt-5.5",
  });
  const session = await agent.session();

  await session.shell(`git clone ${payload.repo} /workspace/project`);
  await session.shell("npm install", { cwd: "/workspace/project" });

  return await session.prompt(payload.prompt);
}
```

E2B

What it is: E2B is one of the earliest sandbox primitives in this space, built by FoundryLabs and around since early 2023.

Best fit: You are building something custom and just need a fast, well-known sandbox primitive.

Avoid when: Your agent runs need to live longer than 24 hours.

Why it matters: AI agents need a code-interpreter sandbox the way humans need a terminal. Make it a 150ms primitive and let the agent loop layer above it. The meaningful operational constraint is a 24-hour session ceiling, which Northflank's sandbox shootout calls out specifically for long-horizon coding agents. Apache-2.0.

```ts
import { Sandbox } from "@e2b/code-interpreter";

const sandbox = await Sandbox.create();
await sandbox.runCode("x = 1");

const execution = await sandbox.runCode("x+=1; x");
console.log(execution.text);
```

Daytona

What it is: Daytona is the "agent experience first" sandbox, built around the explicit thesis "Agent Experience Is the Only Experience That Matters".

Best fit: You want a sandbox optimized for agent-driven workloads and persistent state across parallel runs.

Avoid when: You need a head-to-head with E2B from published benchmarks; Northflank's shootout does not include Daytona or Vercel Sandbox.

Why it matters: Humans are no longer the primary sandbox user; agents are, and the runtime should be optimized accordingly. Long-running, stateful, persistent across parallel runs. Daytona is the substrate that Flue's coding-agent mode relies on, and it is also where many teams running their own AFK pipelines for Claude Code and Codex have ended up.

```ts
import { Daytona } from "@daytonaio/sdk";

const daytona = new Daytona();
const sandbox = await daytona.create();

const response = await sandbox.process.exec("echo 'Hello, World!'");
console.log(response.result);

await sandbox.delete();
```

How to pick a sandbox harness

| Need | Default pick | Why | Watch out |
|---|---|---|---|
| Fan a working Claude Code workflow out against a repo | Sandcastle | TS-native; branch strategies and worktree variants are first-class | Library, not a platform; you provide the runtime |
| Hosted variant of the Sandcastle pattern | Flue with Daytona | Same harness model as your other Flue agents; container-backed | No built-in branch orchestration; write that yourself |
| Fast, well-known sandbox primitive for custom builds | E2B | 150ms cold start; Apache-2.0; battle-tested since early 2023 | 24-hour session ceiling makes long-horizon coding runs awkward |
| Sandbox optimized for agent workloads with persistent state | Daytona | Long-running, stateful, persistent across parallel runs | No published head-to-head benchmarks vs E2B yet |

This space is forming in real time. The taxonomy hasn't settled; the vocabulary hasn't settled; even the awesome-list defining the category is six weeks old. What is clear is the direction of travel: the sandbox is becoming a swappable adapter under the harness, the harness is becoming a swappable adapter under the agent, and the agent (Claude Code, Codex) is staying still while everything else moves around it.

For most TS shops, the sandbox layer is probably overkill today. The shops that need it are the ones that already have a Claude Code workflow that works locally, want to fan it out to ten parallel agents against a repo while everyone is asleep, and are willing to manage the merge conflicts the next morning.

The decision tree

The three questions, with default picks. Most teams should land on at most two of these layers, often just one.

1. Are you building an agent loop? → A loop framework. The default shortlist is Mastra (batteries-included) or Vercel AI SDK with Agent (minimal new surface area). Reach further afield only if you have specific constraints; see the picker table in the loop section.

2. Do you have agent code and need to deploy it as a service? → A runtime. The default depends on what you wrote: Mastra Platform for Mastra, LangSmith Deployment for LangGraph, Claude Managed Agents for Anthropic-hosted execution. Framework-agnostic: Inngest or Flue.

3. Do you have a coding agent that already works and want to fan it out? → A sandbox harness. Sandcastle is the cleanest TS-native fit. Flue with Daytona if you want a hosted variant. E2B or Daytona directly if you only need the sandbox primitive.
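The three questions are independent, which is easy to see when they are written down as a function. This is only an illustrative sketch; the `Needs` field names are invented here to mirror the questions above.

```typescript
type Layer = "loop" | "runtime" | "sandbox";

// Hypothetical field names, one per question in the decision tree.
interface Needs {
  buildingAgentLoop: boolean; // question 1
  needsHostedDurableService: boolean; // question 2
  fanningOutCodingAgent: boolean; // question 3
}

function pickLayers(needs: Needs): Layer[] {
  const layers: Layer[] = [];
  if (needs.buildingAgentLoop) layers.push("loop");
  if (needs.needsHostedDurableService) layers.push("runtime");
  if (needs.fanningOutCodingAgent) layers.push("sandbox");
  return layers;
}

// A plain generateObject pipeline answers "no" three times and needs nothing.
const pipeline = pickLayers({
  buildingAgentLoop: false,
  needsHostedDurableService: false,
  fanningOutCodingAgent: false,
});

// A tool-using agent that must survive long pauses needs a loop and a runtime.
const durableAgent = pickLayers({
  buildingAgentLoop: true,
  needsHostedDurableService: true,
  fanningOutCodingAgent: false,
});
```

Here `pipeline` is an empty list and `durableAgent` is `["loop", "runtime"]`; a result with all three layers is possible but should be rare.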

A worked example

Suppose you want a support agent that can look up orders, issue refunds, and pause for manager approval before any refund over $500. The temptation is to look at the three layers and pick one of each. That is how shops end up paying Mastra Platform, Inngest, and LangSmith Deployment on the same project.

The actual decisions:

- Is the agent loop complex? No. A Mastra or Vercel AI SDK Agent with three tools and a stop condition is enough. That is your loop choice.

- Does it need durable approval pauses? Yes. Put the agent on Inngest (or Vercel Workflows + DurableAgent) so the work survives the human taking 2 hours to respond. That is your runtime choice.

- Is it modifying a repo in a sandbox? No. The sandbox layer is irrelevant.

One loop + one runtime, no sandbox. Two vendors, not three.
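The approval rule in this example is small enough to sketch on its own. The names below (`processRefund`, `AskManager`, `IssueRefund`) are hypothetical and not tied to any framework; the point is that the pause is just an awaited call, and the runtime's job is to keep that await alive for hours.

```typescript
const APPROVAL_THRESHOLD_USD = 500;

type RefundRequest = { orderId: string; amountUsd: number };
type AskManager = (request: RefundRequest) => Promise<boolean>;
type IssueRefund = (request: RefundRequest) => Promise<void>;

async function processRefund(
  request: RefundRequest,
  askManager: AskManager,
  issueRefund: IssueRefund,
): Promise<"refunded" | "rejected"> {
  // "Over $500" means a refund of exactly $500 goes through without approval.
  if (request.amountUsd > APPROVAL_THRESHOLD_USD) {
    // In a durable runtime, this await is where the run would pause for the manager.
    const approved = await askManager(request);
    if (!approved) return "rejected";
  }
  await issueRefund(request);
  return "refunded";
}

// Stubs for a dry run.
const issued: string[] = [];
const issueRefund: IssueRefund = async (request) => {
  issued.push(request.orderId);
};
const managerSaysNo: AskManager = async () => false;
const managerSaysYes: AskManager = async () => true;
```

With these stubs, a $500 refund is issued even when the manager would say no (nobody asks), a $500.01 refund is rejected, and a $900 refund goes through once approved.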

And the honest take

Most TS shops reading this might not need any of these yet. The single generateObject call from the cold-open still does an enormous amount of work for an enormous number of teams.

The point isn't to go pick a framework. The point is: when the time comes, know what you are picking between. The loop is the agent. The runtime hosts the loop. The sandbox fans out an existing coding agent. Mixing them up is how shops end up paying for three vendors that each solve a different problem.

What this leaves out

Out of scope on purpose: no-code builders (n8n, Lindy, Gumloop, OpenAI Agent Builder) target a different audience; Python-only frameworks (CrewAI, AutoGen, Pydantic AI) aren't TS; the broader sandbox layer (Northflank, Modal, Blaxel, Runloop) overlaps E2B and Daytona — see Northflank's sandbox shootout (vendor-authored caveat); the Claude Code framework wars (Superpowers, gstack, GSD, Ruflo, BMAD) are upstream — see Five Claude Code Frameworks Compared.

Further reading

- Speakeasy: agent framework comparison — cleanest published taxonomy.

- Generative.inc: Mastra AI complete guide — thorough single-framework deep dive.

- Northflank: Best sandboxes for coding agents — sandbox benchmarks (vendor-authored).

- ai-boost/awesome-harness-engineering — category-definition list.

- OpenAI: Harness Engineering talk — the talk that named the discipline.

- Matt Pocock: I Open-Sourced My Own AFK Software Factory — Sandcastle launch.
